Your model performed well in testing. In production, accuracy drops 15% within two weeks. The sensors haven’t changed. The model hasn’t changed. But someone updated the preprocessing script three days ago, and nobody tracked what that change did to feature distributions.

This isn’t a model problem. It’s a readiness problem.

Preparing sensor data for ML isn’t a single operation between collection and training. It’s a sequence of decisions: how signals are aligned, how missing data is handled, how noise is treated, how transformations are tracked. When these decisions are inconsistent, even well-designed models fail.

This article outlines a practical framework for preparing time-series data for machine learning—one that emphasizes consistency, traceability, and production readiness.

Why Clean Data Isn’t Enough

Many teams start with a familiar goal: clean the data. Remove errors, fill missing values, resample, normalize. If the dataset looks reasonable and the model trains, the data is considered “ready.”

Cleanliness isn’t readiness.

A dataset can be clean and still:

- Encode inconsistent assumptions

- Break when new data arrives

- Produce unstable predictions

- Be impossible to reproduce

ML readiness isn’t about how data looks. It’s about how reliably it supports learning, evaluation, deployment, and iteration.

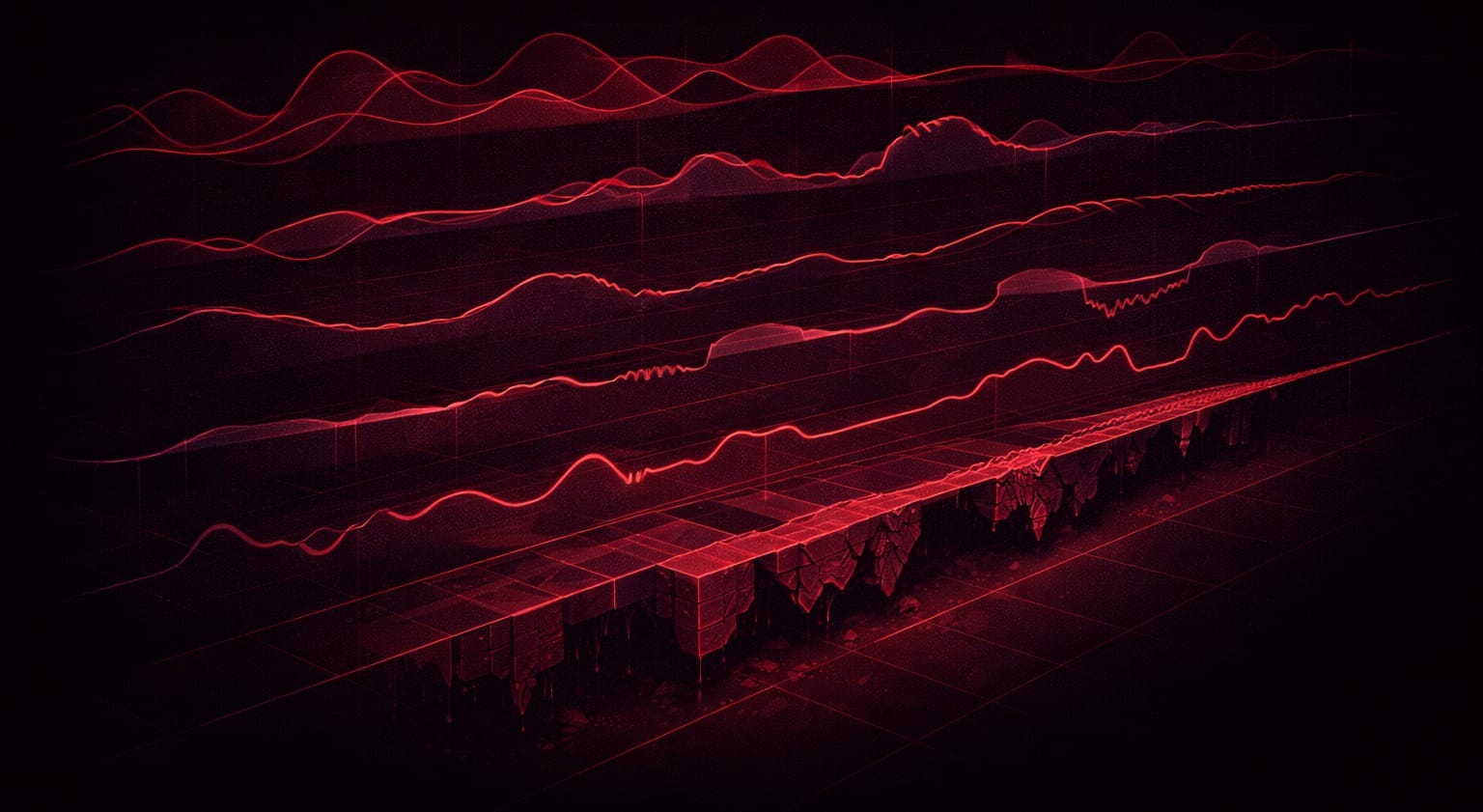

ML Readiness Is a Pipeline Property

ML readiness isn’t a property of a dataset. It’s a property of the pipeline that produces it.

A dataset extracted once, cleaned manually, and handed to a model may work for a prototype. It rarely survives production, where new sensors, changing conditions, missing data, and evolving requirements are routine.

A ready pipeline produces datasets that are:

- Consistent across runs

- Stable under minor input variations

- Traceable to raw sources

- Aligned with downstream modeling assumptions

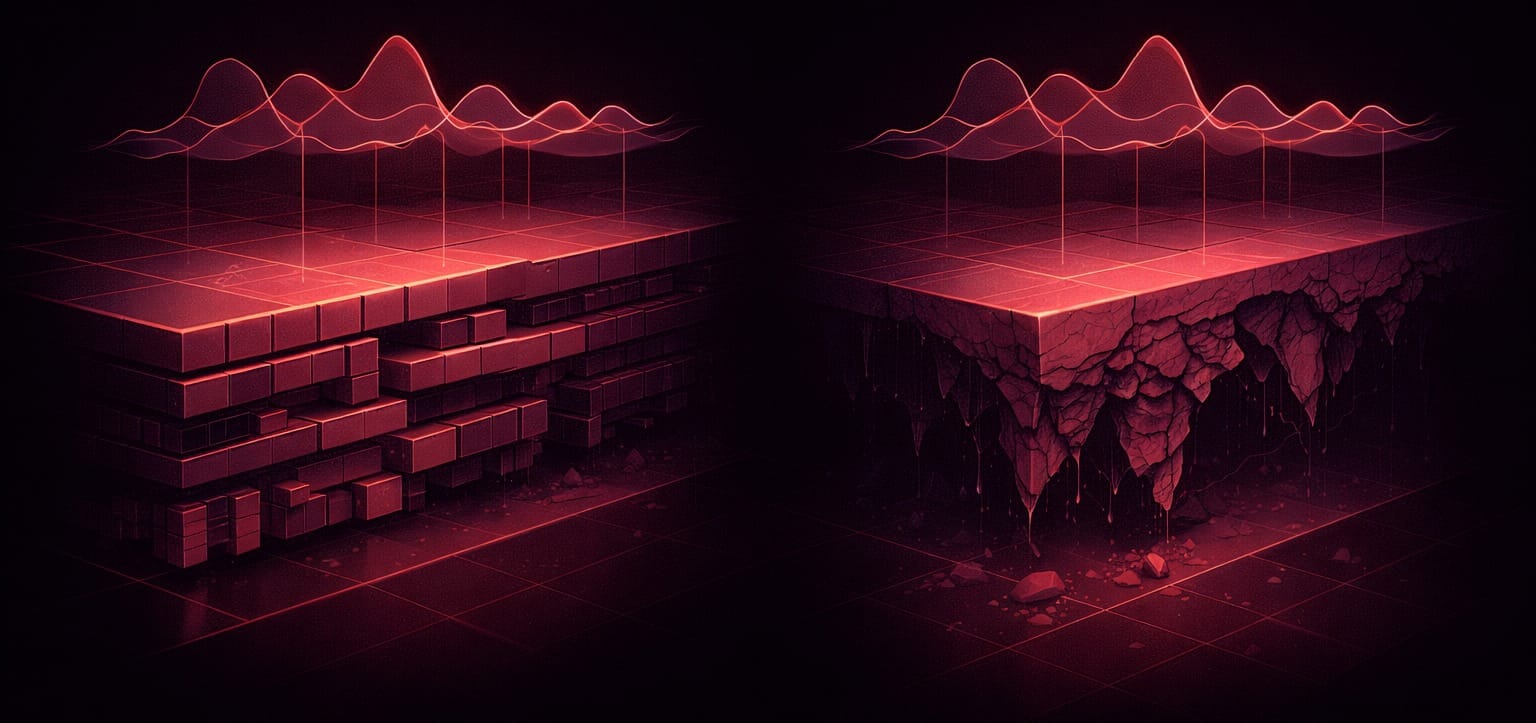

The Reality of Sensor Data

Real-world sensor data is messy in predictable ways. Sampling rates change. Sensors drift. Clocks skew. Values saturate. Channels disappear temporarily. New signals are added while old ones are deprecated.

None of this is exceptional. It’s normal.

Pipelines that assume static conditions fail quietly. They continue producing outputs, but those outputs lose alignment with reality. Models trained on such data perform well historically and poorly prospectively.

Preparing data for ML means designing pipelines that anticipate change.
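None of these conditions has to pass silently. As a minimal sketch, the checks below flag gaps, sampling-rate changes, non-monotonic timestamps (one visible symptom of clock problems), and saturated values in a single channel. It assumes each signal arrives as a list of `(timestamp_seconds, value)` pairs in arrival order and that the sensor’s valid range is known; `audit_signal`, `SignalAudit`, and the default thresholds are hypothetical, chosen for illustration.

```python
from dataclasses import dataclass, field
from statistics import median


@dataclass
class SignalAudit:
    nominal_interval: float
    gaps: list = field(default_factory=list)            # (last_t, next_t) around a missing span
    rate_changes: list = field(default_factory=list)    # timestamps where spacing deviates from nominal
    non_monotonic: list = field(default_factory=list)   # timestamps that go backwards or repeat
    saturated: int = 0                                  # samples at or beyond the range limits


def audit_signal(samples, lo, hi, rate_tolerance=0.1, gap_factor=3.0):
    """Audit (timestamp, value) pairs in arrival order.

    The nominal interval is the median spacing between consecutive timestamps.
    Thresholds are illustrative defaults, not recommendations.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a sampling interval")
    times = [t for t, _ in samples]
    deltas = [b - a for a, b in zip(times, times[1:])]
    nominal = median(deltas)
    audit = SignalAudit(nominal_interval=nominal)
    for t_prev, t_next, d in zip(times, times[1:], deltas):
        if d <= 0:
            audit.non_monotonic.append(t_next)
        elif d > gap_factor * nominal:
            audit.gaps.append((t_prev, t_next))
        elif abs(d - nominal) > rate_tolerance * nominal:
            audit.rate_changes.append(t_next)
    audit.saturated = sum(1 for _, v in samples if v <= lo or v >= hi)
    return audit
```

Run on every incoming batch, an audit like this turns quiet changes into reviewable numbers: a 1 Hz stream with a 7-second hole and one reading pinned at the sensor’s maximum reports one gap and one saturated sample instead of flowing into training unnoticed.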

Common ML Readiness Failures

These failures appear constantly in production systems:

- Training-serving skew. Preprocessing differs between training and deployment, causing models to see different feature distributions.

- Normalization with future data. Statistics computed on the full dataset, including test data, leak information unavailable at inference time.

- Unversioned preprocessing. Changes to scripts aren’t tracked, making earlier results impossible to reproduce.

- Inconsistent gap handling. Different signals use different filling strategies, introducing biases that models learn to exploit.

- Labels misaligned with preprocessing. Signals are resampled or filtered, but labels still reference the original timestamps or sample indices.

These don’t cause immediate crashes. They show up as gradual degradation, unexplained inconsistencies, or models that “mostly work.”
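The normalization failure is easy to avoid once it is made explicit. A minimal sketch, assuming a single channel stored as a time-ordered Python list: split chronologically, fit statistics on the training portion only, and reuse those frozen statistics for everything that comes later. The function names are hypothetical; in a real system the fitted statistics would be saved alongside the model so serving uses the same values.

```python
from statistics import fmean, pstdev


def chronological_split(values, train_fraction=0.8):
    """Split a time-ordered series without shuffling, so the test portion stays in the future."""
    cut = int(len(values) * train_fraction)
    return values[:cut], values[cut:]


def fit_scaler(train_values):
    """Compute z-score statistics from training data only."""
    mean = fmean(train_values)
    std = pstdev(train_values) or 1.0  # constant signal: avoid division by zero
    return mean, std


def apply_scaler(values, stats):
    """Apply frozen statistics; never refit on evaluation or live data."""
    mean, std = stats
    return [(v - mean) / std for v in values]


series = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
train, test = chronological_split(series)
stats = fit_scaler(train)          # uses only the first 8 points
test_scaled = apply_scaler(test, stats)
leaky = fit_scaler(series)         # wrong: the mean now includes test data
```

Here the leaky mean (5.5) differs from the training-only mean (4.5). That gap is exactly the information the model would never have at inference time.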

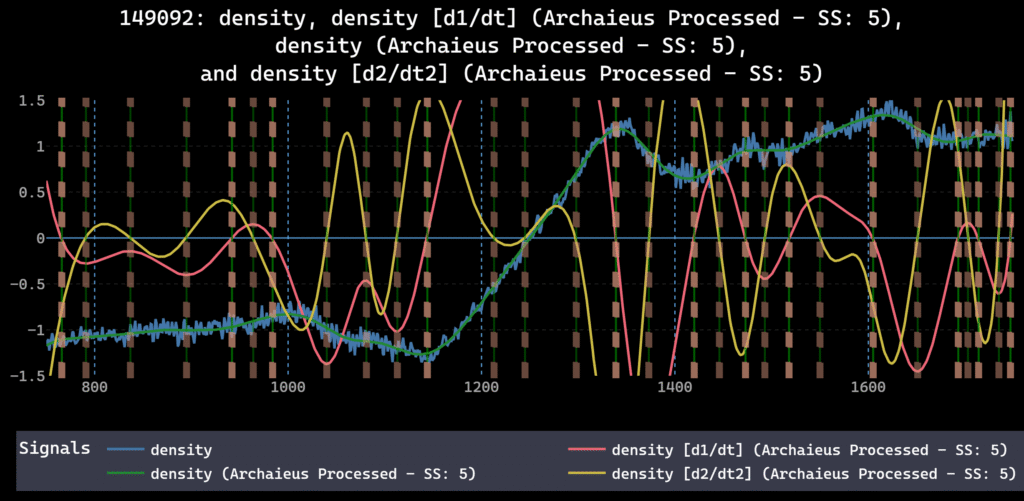

Consistency Across Signals and Time

A core requirement of ML readiness is consistency.

Signals must be treated consistently with respect to:

- Alignment and timing

- Gap handling

- Resampling strategy

- Smoothing and filtering

- Normalization and scaling

Inconsistent handling across signals introduces biases. Inconsistent handling over time makes results impossible to compare.

Consistency doesn’t mean rigidity. It means changes are deliberate, documented, and applied uniformly.
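One way to make uniform treatment the default is to define preprocessing parameters once and apply them to every channel. The sketch below assumes `(timestamp_seconds, value)` samples and bucket-mean resampling onto a shared grid; `ResampleConfig` and `preprocess_all` are hypothetical names, and mean aggregation is only one of several reasonable choices. Empty buckets are left as `None` so gap handling remains a separate, explicit step.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass(frozen=True)
class ResampleConfig:
    start: float     # grid origin shared by all signals (seconds)
    end: float       # grid end, exclusive (seconds)
    interval: float  # bucket width (seconds)


def resample_mean(samples, cfg):
    """Average (timestamp, value) samples into fixed-width buckets on the shared grid."""
    n = int((cfg.end - cfg.start) // cfg.interval)
    buckets = [[] for _ in range(n)]
    for t, v in samples:
        k = int((t - cfg.start) // cfg.interval)
        if 0 <= k < n:  # out-of-grid samples are dropped the same way for every signal
            buckets[k].append(v)
    return [fmean(b) if b else None for b in buckets]


def preprocess_all(signals, cfg):
    """Apply one frozen config to every channel, so no signal gets special treatment."""
    return {name: resample_mean(samples, cfg) for name, samples in signals.items()}
```

Because every channel shares one grid, outputs have the same length and line up index by index. Changing the interval means changing the config, which is visible and versionable, rather than editing per-signal code.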

Alignment Errors Propagate

Temporal alignment is one of the most error-prone aspects of sensor data preparation.

Misalignment doesn’t produce dramatic failures. It produces subtle distortions: phase shifts, weakened correlations, apparent delays that models misinterpret as causality.

Because alignment errors originate upstream, their effects propagate. Feature extraction, labeling, and model training all assume alignment is already correct.

Alignment must be established early and verified continuously.
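A common way to establish alignment explicitly is an as-of join: for each timestamp on a reference clock, take the most recent sample from another stream, but only if it falls within a stated tolerance. pandas offers `merge_asof` with a `tolerance` parameter for the same idea; the plain-Python sketch below assumes both streams are sorted by time and share a clock, and `asof_align` and `match_rate` are hypothetical names. Tracking the match rate over time gives a cheap, continuous check on alignment.

```python
from bisect import bisect_right


def asof_align(reference_times, other, tolerance):
    """Align `other` (time-sorted (timestamp, value) pairs) to `reference_times`.

    For each reference timestamp, take the latest `other` sample at or before it,
    but only if it is no older than `tolerance` seconds; otherwise emit None.
    Looking only backwards keeps the alignment causal.
    """
    other_times = [t for t, _ in other]
    aligned = []
    for t in reference_times:
        i = bisect_right(other_times, t) - 1   # index of last sample with time <= t
        if i >= 0 and t - other_times[i] <= tolerance:
            aligned.append(other[i][1])
        else:
            aligned.append(None)
    return aligned


def match_rate(aligned):
    """Fraction of reference timestamps that found a partner within tolerance."""
    return sum(v is not None for v in aligned) / len(aligned) if aligned else 0.0
```

A falling match rate, or one that varies with time of day, is worth alerting on; it often means one stream’s clock or sampling rate has changed.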

Handling Missing Data

Missing data is unavoidable. How it’s handled has direct consequences for model behavior.

Simple filling strategies can work, but they encode assumptions about continuity and causality. Filling with future information, such as backward filling or interpolating toward the next observed value, may improve offline metrics while making models unusable in real time, where that next value hasn’t arrived yet. Aggressive interpolation may also smooth over meaningful discontinuities.

An ML-ready pipeline makes missing-data handling explicit, consistent, and aligned with deployment context.
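A minimal sketch of causal gap handling, assuming a resampled series where missing buckets are `None`: forward fill uses only past observations, a `max_gap` limit keeps long outages from being papered over, and a mask records which values were imputed so a model (or a reviewer) can see it. The function name and parameter are hypothetical; a sensible limit depends on the signal and on how stale a value can be in deployment.

```python
def ffill_limited(values, max_gap):
    """Forward-fill None values from past observations only, for at most
    `max_gap` consecutive steps. Returns (filled_values, was_filled_mask)."""
    filled, was_filled = [], []
    last, run = None, 0
    for v in values:
        if v is not None:
            last, run = v, 0
            filled.append(v)
            was_filled.append(False)
        elif last is not None and run < max_gap:
            run += 1
            filled.append(last)       # carry the last observed value forward
            was_filled.append(True)
        else:
            run += 1
            filled.append(None)       # gap too long (or no history): leave it missing
            was_filled.append(False)
    return filled, was_filled
```

The same function runs unchanged on a live stream, because it never looks ahead. The mask can be fed to the model as an extra feature so imputed values are never mistaken for measurements.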

Preparing Data With Deployment in Mind

One of the most common sources of failure is a mismatch between training-time preparation and deployment reality.

For example:

- Training data normalized using global statistics unavailable in production

- Labels defined using smoothed signals that rely on future information

- Features depending on signals that are delayed or unreliable in real time

These mismatches, known as training-serving skew, are rarely obvious during development. They surface only when models are deployed.

Preparing data for ML means preparing it for the environment where the model will operate.
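The smoothing case can be checked mechanically. A centered moving average looks ahead, so labels or features built from it depend on values that don’t exist yet at inference time; a trailing average does not. The sketch below uses plain Python and hypothetical function names, and includes a simple probe (a heuristic, not a proof): perturb everything after index `i` and see whether the output at `i` changes.

```python
def trailing_mean(values, window):
    """Causal moving average: the output at i uses only values[i-window+1 .. i]."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def centered_mean(values, window):
    """Non-causal moving average: the output at i also averages values after i."""
    half = window // 2
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def uses_future(smoother, values, window, i):
    """True if changing values after index i changes the smoother's output at i."""
    perturbed = values[: i + 1] + [v + 1000.0 for v in values[i + 1:]]
    return smoother(values, window)[i] != smoother(perturbed, window)[i]
```

Running a probe like this over every feature and label transform in the pipeline is a cheap way to catch look-ahead before deployment does.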

Traceability as an Operational Requirement

As pipelines mature, questions arise:

- Why did this model behave differently last week?

- What changed in the data?

- Which preprocessing steps were applied?

Without traceability, these questions can’t be answered. Debugging becomes guesswork.

Knowing how data was transformed, in what order, and with what parameters is an operational requirement for maintaining ML systems.
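A minimal sketch of step-level lineage, assuming data small enough to serialize as JSON: each transformation is recorded with its name, parameters, a UTC timestamp, and hashes of its input and output, so consecutive records chain together and a changed result can be traced to the step that produced it. `run_step` and `fingerprint` are hypothetical helpers; a production system would more likely hash files or dataset versions than in-memory lists.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(obj):
    """Stable SHA-256 of a JSON-serializable object (keys sorted for determinism)."""
    payload = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_step(lineage, name, func, data, **params):
    """Apply one transformation and append a record of what was done to `lineage`."""
    result = func(data, **params)
    lineage.append({
        "step": name,
        "params": params,
        "input_hash": fingerprint(data),
        "output_hash": fingerprint(result),
        "applied_at": datetime.now(timezone.utc).isoformat(),
    })
    return result
```

Comparing two lineage logs record by record answers “what changed?” directly: the first record whose `params` or `output_hash` differs is where the runs diverged.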

Why One-Off Scripts Don’t Scale

Many pipelines begin as scripts written to solve immediate problems. This is natural and often necessary.

Over time, those scripts become infrastructure. And as infrastructure, they tend to:

- Encode assumptions implicitly

- Drift as they’re modified

- Be difficult to version and audit

- Break silently when inputs change

ML readiness requires moving beyond scripts toward structured pipelines with clear stages, defined behavior, and repeatable outputs.
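As a sketch of what “clear stages, defined behavior” can look like in code, the classes below declare a pipeline as an ordered list of named stages with explicit parameters plus a version string, and derive a hash from that declaration. `Stage`, `Pipeline`, and `manifest_hash` are hypothetical names, not any particular framework’s API. The manifest captures order and parameters but not the code inside each stage, so this design relies on bumping `version` whenever stage logic changes.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Stage:
    name: str
    func: Callable
    params: dict = field(default_factory=dict)


@dataclass
class Pipeline:
    version: str   # bump whenever any stage's code changes
    stages: list

    def manifest(self):
        """Declarative description: version, stage order, and parameters."""
        return {
            "version": self.version,
            "stages": [{"name": s.name, "params": s.params} for s in self.stages],
        }

    def manifest_hash(self):
        """Identifier for this exact configuration; store it with every dataset produced."""
        payload = json.dumps(self.manifest(), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def run(self, data):
        """Apply stages strictly in the declared order."""
        for stage in self.stages:
            data = stage.func(data, **stage.params)
        return data
```

Storing the manifest hash next to every generated dataset makes it possible to tell later whether two datasets came from the same pipeline, including whether the order of operations changed.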

Best Practices for ML-Ready Sensor Data

- Treat preprocessing, harmonization, and labeling as interconnected stages. Changes in one affect the others.

- Enforce a consistent order of operations. Document the sequence explicitly. Version your pipelines.

- Make assumptions explicit. Write down which filling strategy is used, where normalization statistics come from, and what alignment tolerance is acceptable.

- Preserve lineage from raw signal to final dataset. Track which transformations were applied, when, and with what parameters.

- Design for evolution. Build pipelines that can change without breaking comparability to earlier runs.

- Test for training-serving skew. Before deployment, verify that preprocessing produces identical outputs in training and serving environments.

Moving beyond one-off scripts toward structured pipelines makes complexity manageable.
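Testing for training-serving skew can be as simple as feeding the same recorded input through both code paths and diffing the outputs. A minimal sketch, assuming training computes features in batch and serving computes them one sample at a time; the trailing-mean feature and all function names are hypothetical stand-ins for a real feature pipeline.

```python
import math
from collections import deque


def batch_trailing_mean(values, window=3):
    """Training path: compute a trailing-mean feature over a whole recorded series."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def make_streaming_trailing_mean(window=3):
    """Serving path: return a function that updates the feature one sample at a time."""
    buf = deque(maxlen=window)

    def step(v):
        buf.append(v)
        return sum(buf) / len(buf)

    return step


def check_parity(values, batch_fn, make_stream_fn, abs_tol=1e-9):
    """Run both paths on the same input; return indices where outputs disagree."""
    offline = batch_fn(values)
    stream_fn = make_stream_fn()
    online = [stream_fn(v) for v in values]
    return [
        i for i, (a, b) in enumerate(zip(offline, online))
        if not math.isclose(a, b, rel_tol=0.0, abs_tol=abs_tol)
    ]
```

Run as a unit test on a recorded slice of real sensor data, an empty result means the two paths agree. A mismatched window, a different fill strategy, or different normalization statistics in serving shows up as a non-empty list of indices.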

What Comes Next

For teams operating ML systems in production, data readiness is often the limiting factor. Models can be retrained. Architectures can be changed. But fragile, opaque pipelines slow everything down.

Investing in ML readiness upstream reduces downstream cost: fewer surprises, faster iteration, more reliable deployment.

This article completes a progression: from preprocessing order, to harmonization, to labeling, to ML readiness as a pipeline property. For teams evaluating tooling, our overview of labeling platforms covers where existing solutions succeed and where gaps remain.