Manual labeling of 500 sensor recordings takes weeks. An ONNX classifier trained on 20 manually labeled examples can process the remaining 480 in minutes, with human review only on low-confidence predictions. In fusion energy diagnostics, this workflow delivers a measured >50X acceleration: from a handful of manually labeled tokamak shots per day to >200 consistently labeled shots per hour (Michoski et al., arXiv:2511.09725).

How do you autolabel sensor data with Python? Four steps:

- Manually label a seed set of representative events (20–50 per class minimum)

- Train a PyTorch classifier and export it to ONNX format

- Run the ONNX model across your full dataset with confidence scoring

- Queue low-confidence predictions for human review; accept high-confidence labels automatically

If your team has been labeling time-series and sensor data at scale and hitting the limits of manual annotation, the sensor data autolabeling pipeline is how you break through. This guide covers the full workflow — seed labels to ONNX deployment to active learning — with real examples from fusion energy diagnostics and robotics.

Before You Autolabel: Why Preprocessing Order Matters

Every step in this guide assumes your data has already been harmonized onto a common time grid. If you are still working with misaligned, multi-rate sensor streams, start with the data harmonization guide for multimodal time series before building an autolabeler.

The reason is not just practical convenience — it is mathematical. When heterogeneous diagnostics and simulation outputs are harmonized and fused into a coherent representation, the underlying state-space manifold that your classifier must learn becomes simpler: lower informational entropy, fewer spurious correlations from misalignment, and better alignment with actual physics. The result: a more compact model that generalizes better across campaigns, devices, and operating regimes (Michoski et al., arXiv:2511.09725).

One critical risk that most autolabeling guides ignore entirely: data leakage through preprocessing. If your gap-filling procedure interpolates across a disruption boundary, or if your normalization draws on post-event signal values, then labels produced by a classifier trained on that data inherit causal contamination. The classifier appears accurate — but only because it saw information that would not be available at prediction time.

The dFL framework mitigates this by allowing causal constraints to be imposed during labeling and preprocessing — restricting fill operations to past-only data, and explicitly tracking provenance so that analysts can audit whether any step introduces information from outside the causal horizon (arXiv:2511.09725, Section 4.1.3). The preprocessing order article covers operator sequencing in full detail.
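The difference between a past-only fill and a leaky one can be shown with a minimal sketch, assuming gaps are marked as `None` in a plain Python list. The function names `causal_forward_fill` and `linear_interpolate` are illustrative and are not part of dFL's API. Forward fill uses only values at or before each timestamp, while interpolation reaches forward across the gap.

```python
from typing import List, Optional


def causal_forward_fill(values: List[Optional[float]]) -> List[Optional[float]]:
    """Fill each gap with the most recent past observation (no look-ahead)."""
    filled: List[Optional[float]] = []
    last_seen: Optional[float] = None
    for v in values:
        if v is not None:
            last_seen = v
        # Leading gaps stay None: there is no past value to draw on.
        filled.append(last_seen)
    return filled


def linear_interpolate(values: List[Optional[float]]) -> List[Optional[float]]:
    """Non-causal fill: each gap sample depends on the NEXT valid observation."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (values[right] - values[left]) / (right - left)
        for i in range(left + 1, right):
            filled[i] = values[left] + step * (i - left)
    return filled


# Hypothetical signal: quiet at 1.0, a gap, then a disruption-like jump to 9.0.
signal = [1.0, 1.0, None, None, 9.0]
causal = causal_forward_fill(signal)   # gap samples stay at the pre-event level
leaky = linear_interpolate(signal)     # gap samples already ramp toward 9.0
```

In the interpolated version, the samples inside the gap rise before the event has been observed, which is the kind of contamination a classifier can silently learn from.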

Stage 1 — Manual Seed Labeling: How Many Examples Do You Need?

For statistical detectors (threshold, zero-crossing, inflection point): 0 manual labels required. These operate on mathematical signal properties, not learned patterns.

For ONNX/PyTorch classifiers: 20–50 labeled examples per class is the minimum viable starting point. At this volume, a 1D CNN or LSTM with 2–3 layers can learn basic event patterns — expect 70–80% precision on high-confidence predictions. For production-grade precision (90%+), target 100+ labels per class. Benchmarks are available on Papers With Code’s time-series classification leaderboard.

Why is the seed label problem structurally harder for sensor data than for images or text? Because expert annotations are expensive, diagnostic systems produce vastly more unlabeled data than any team can curate, and the events you care about — ELMs in tokamak plasmas, disruptions, servo misalignment in robotics — are rare compared to stable operating conditions.

This class imbalance skews classifiers toward the majority class unless you deliberately stratify your seed labels (arXiv:2511.09725, Sections 2.1–2.2). Use precision, recall, and F1-score as your evaluation metrics rather than accuracy, which misleads on imbalanced data.
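A small worked example shows why accuracy misleads here. The data is hypothetical: 95 stable windows and 5 event windows, scored against a degenerate classifier that always predicts "stable". The helper name `precision_recall_f1` is illustrative.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for the `positive` class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical imbalanced set: 0 = stable operation, 1 = rare event.
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100  # a classifier that never predicts the event

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
```

Accuracy comes out at 0.95 even though the classifier finds none of the events, while recall and F1 for the event class are both zero.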

What matters more than volume: boundary coverage. Sensor events are not uniform. An ELM in a tokamak plasma shot looks different at onset, at peak, and at decay. A servo misalignment in a robotics dataset [internal link → /labeling-robotics-sensor-data-manipulation-ml/] looks different at 5 ms lag versus 50 ms lag. For high boundary coverage, label 5 examples of each event subtype (5 small ELMs, 5 large ELMs, 5 partial ELMs, 5 ELM-free transitions) rather than 100 examples of only large ELMs. Stratify across time, sensor configuration, and signal quality. The boundary ambiguity problem is covered in the guide to labeling time-series data at scale.
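One way to enforce this kind of coverage is to pick seed candidates per subtype instead of sampling the pool uniformly. The sketch below assumes each candidate event already carries a rough `subtype` tag from a first expert pass. The field names and the function `stratified_seed_sample` are hypothetical.

```python
import random
from collections import Counter, defaultdict


def stratified_seed_sample(candidates, per_subtype=5, seed=0):
    """Pick up to `per_subtype` candidates from each event subtype."""
    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    by_subtype = defaultdict(list)
    for item in candidates:
        by_subtype[item["subtype"]].append(item)
    selected = []
    for subtype in sorted(by_subtype):
        group = by_subtype[subtype]
        # Rare subtypes may have fewer than `per_subtype` examples; take them all.
        selected.extend(rng.sample(group, min(per_subtype, len(group))))
    return selected


# Hypothetical candidate pool dominated by large ELMs.
candidates = (
    [{"shot": i, "subtype": "large_elm"} for i in range(100)]
    + [{"shot": 100 + i, "subtype": "small_elm"} for i in range(8)]
    + [{"shot": 200 + i, "subtype": "partial_elm"} for i in range(6)]
    + [{"shot": 300 + i, "subtype": "elm_free"} for i in range(3)]
)
seeds = stratified_seed_sample(candidates, per_subtype=5)
seed_counts = Counter(item["subtype"] for item in seeds)
```

A uniform sample of the same size would mostly return large ELMs; the stratified version caps each subtype and keeps every example of the rarest one.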

Stage 2 — Training the Classifier: ONNX vs. Statistical Detectors

Two paths for building a custom autolabeler plug-in:

Path A: Statistical Detectors (No Training Required)

Statistical detectors ship built into most time-series labeling tools [internal link → /time-series-labeling-tools-2026/] as baseline autolabelers:

- Threshold detectors — flag signal crossings above/below a value (temperature alarms, voltage spikes)

- Zero-crossing and inflection point detectors — oscillation detection, onset events, curvature changes

- Peak/valley detectors — heartbeat detection in ECG, pressure cycles

- Statistical anomaly detectors — z-score or ARIMA residual deviations for general anomaly flagging
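Two of the detectors above can be sketched in a few lines of standard-library Python. This is an illustration of the idea under simple assumptions (a single channel held in a list, a global z-score computed over the whole record) and not the implementation shipped by any particular tool.

```python
from statistics import mean, pstdev


def threshold_crossings(signal, threshold):
    """Return indices where the signal crosses upward through `threshold`."""
    return [
        i for i in range(1, len(signal))
        if signal[i - 1] <= threshold < signal[i]
    ]


def zscore_anomalies(signal, z_limit=3.0):
    """Return indices whose distance from the mean exceeds `z_limit` sigmas."""
    mu = mean(signal)
    sigma = pstdev(signal)
    if sigma == 0:
        return []  # a constant signal has no outliers
    return [i for i, x in enumerate(signal) if abs(x - mu) / sigma > z_limit]


# Hypothetical channel: low-level noise with one spike at index 3.
signal = [0.1, 0.2, 0.1, 5.0, 0.2, 0.1, 0.2, 0.1, 0.2, 0.1, 0.2, 0.1]
crossings = threshold_crossings(signal, threshold=1.0)
anomalies = zscore_anomalies(signal, z_limit=3.0)
```

Both detectors flag the spike without any labeled data. Note that the z-score here uses statistics from the full record, so it suits offline labeling and would need a rolling, past-only variant for causal use.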

The limitation: statistical detectors don’t learn. If your event is “the moment before a robot arm fails” — something a human recognizes but can’t reduce to a single threshold — you need a learned classifier.

Path B: ONNX Classifier (Learned Event Patterns)

For events requiring pattern recognition, train a classifier in PyTorch and export to ONNX, an open exchange format originally developed by Microsoft and Facebook (now Meta) and now hosted by the LF AI & Data Foundation. An exported model runs in ONNX Runtime and can be consumed by toolchains such as Apache TVM and OpenVINO without rewriting the model code.

Export to ONNX — reference code:

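    import torch
    import onnx

    # `model` is the trained PyTorch classifier; `dummy_input` is an example
    # tensor with the same shape and dtype the model expects at inference time.
    torch.onnx.export(
        model, dummy_input, "event_classifier.onnx",
        input_names=["signal"], output_names=["class_probs"],
        # Let the batch dimension vary so bulk inference can use any batch size.
        dynamic_axes={"signal": {0: "batch"}, "class_probs": {0: "batch"}},
    )

    # Validate the exported graph before handing it to the autolabeler.
    onnx.checker.check_model(onnx.load("event_classifier.onnx"))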
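Once exported, the file can be loaded and run with ONNX Runtime. The sketch below assumes the model keeps the input name `signal` and output name `class_probs` from the export call, that the output already contains per-class probabilities, and that `windows` has whatever shape the model was exported with. The function names are illustrative. The heavy imports sit inside `run_classifier` so that the small helper can be used without the runtime installed.

```python
from typing import List, Sequence, Tuple


def run_classifier(model_path: str, windows) -> List[List[float]]:
    """Run an exported ONNX classifier on a batch of signal windows.

    `windows` is any array-like batch with the shape the model expects
    (an assumption here; the source does not specify the input shape).
    """
    # Imported lazily so `top_class` below works without these packages.
    import numpy as np
    import onnxruntime as ort

    session = ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])
    batch = np.asarray(windows, dtype=np.float32)
    # session.run returns one array per requested output name.
    (class_probs,) = session.run(["class_probs"], {"signal": batch})
    return class_probs.tolist()


def top_class(probs: Sequence[float]) -> Tuple[int, float]:
    """Return (class index, confidence) for one row of class probabilities."""
    best = max(range(len(probs)), key=lambda i: probs[i])
    return best, probs[best]
```

A typical call would be `rows = run_classifier("event_classifier.onnx", windows)` followed by `top_class(row)` for each row, which yields the label and the confidence score used for routing.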

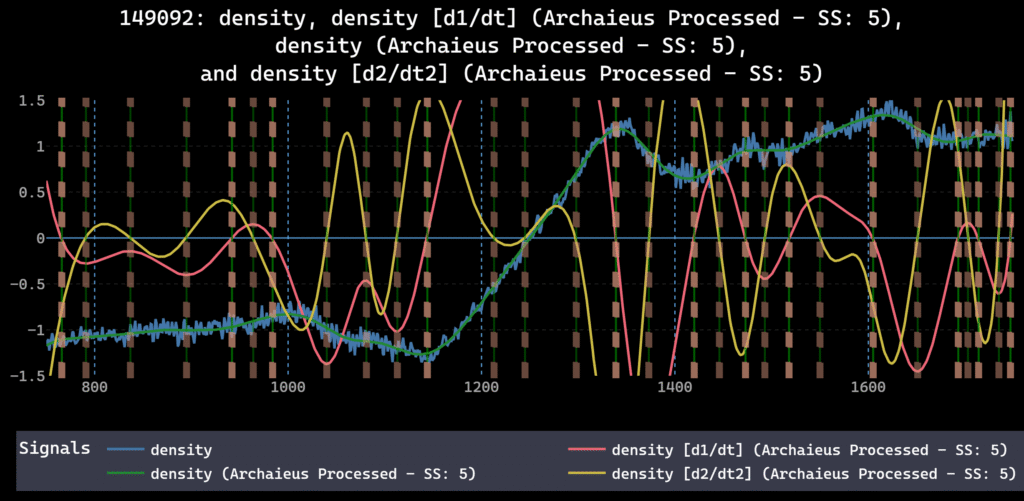

dFL’s Autolabel SDK accepts ONNX models as drop-in plug-ins. Configure input mapping, set confidence thresholds, and run bulk autolabeling. The SDK handles batching, confidence extraction, and provenance logging — every autolabel decision is tracked in the provenance DAG.

Preprocessing warning: smoothing can erase the features your classifier needs. Smoothing, like downsampling, generates information loss and is non-invertible. It alters both the autocovariances and cross-covariances that downstream classifiers rely on to distinguish event boundaries from noise.

Over-smoothing a noisy magnetic probe signal, for example, can attenuate the sharp onset signature of an ELM — the exact feature your ONNX classifier must detect. Choose smoothing parameters that remove sensor noise without erasing genuine transitions, instability onsets, or control interventions. The arXiv methodology paper (Section 4.3) covers smoothing’s effect on downstream inference in detail.
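The attenuation is easy to see on a toy signal. The sketch below applies a trailing (past-only) moving average to a hypothetical flat baseline with a sharp three-sample burst and compares the surviving peak for a short and a long window. The window lengths are arbitrary choices for illustration.

```python
def trailing_moving_average(signal, window):
    """Average each sample with up to `window - 1` preceding samples."""
    out = []
    for i in range(len(signal)):
        start = max(0, i - window + 1)  # early samples use what is available
        chunk = signal[start:i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


# Hypothetical probe signal: flat baseline with a sharp 3-sample burst.
signal = [0.0] * 20 + [1.0, 1.0, 1.0] + [0.0] * 20

peak_raw = max(signal)
peak_light = max(trailing_moving_average(signal, window=3))
peak_heavy = max(trailing_moving_average(signal, window=15))
```

With a 3-sample window the burst keeps its full amplitude; with a 15-sample window the peak drops to a fifth of the original, and the onset is spread over many samples.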

Stage 3 — Bulk Autolabeling and Confidence Thresholds

With a trained classifier, you run inference across your full dataset. Set three confidence tiers:

- Auto-accept (e.g., confidence of 0.95 or higher): accepted automatically, no human review

- Review band (e.g., 0.70–0.95): queued for human-in-the-loop review

- Auto-reject (below 0.70): logged but not applied

Start conservative and loosen as classifier performance improves across iterations.
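The three tiers reduce to a small routing function. This is a minimal sketch using the example thresholds from the list above; the names `route_prediction` and `route_batch` and the tier strings are illustrative.

```python
def route_prediction(confidence, accept_at=0.95, review_at=0.70):
    """Map a confidence score to one of the three tiers."""
    if confidence >= accept_at:
        return "auto_accept"
    if confidence >= review_at:
        return "review"
    return "auto_reject"


def route_batch(confidences, accept_at=0.95, review_at=0.70):
    """Group sample indices by tier so each group can be handled separately."""
    tiers = {"auto_accept": [], "review": [], "auto_reject": []}
    for index, confidence in enumerate(confidences):
        tiers[route_prediction(confidence, accept_at, review_at)].append(index)
    return tiers


# Hypothetical confidence scores for five windows.
tiers = route_batch([0.99, 0.81, 0.42, 0.95, 0.70])
```

Loosening the thresholds in later iterations then means changing two arguments, which also makes the values easy to record alongside each run.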

Example: the DIII-D tokamak autolabeling workflow. At General Atomics, plasma physicists manually labeled ELMs and plasma regime transitions on ~20 shots, across 60+ channels sampled at 1 kHz or higher.

The diagnostic signals included plasma current (ip), toroidal mode amplitudes from distributed magnetic probes (magnetics amplitudes), neutral beam injection power (pinj), pedestal width (wnt), normalized plasma beta (betan), line-averaged electron density, and filterscope channels (fs0*) measuring D-alpha and impurity emissions near the plasma edge. An ONNX plasma mode classifier bulk-labeled hundreds of additional shots. High-confidence predictions (above 0.92) were accepted automatically; borderline predictions were queued for physicist review. The provenance DAG tracked every step.

Result: a measured >50X acceleration in labeling throughput — from a handful of shots per day to >200 shots/hour, consistently labeled across all diagnostic channels. What previously took 2–3 weeks per shot campaign became a five-minute DAG review (Michoski et al., arXiv:2511.09725). The full workflow is also covered in the guide to labeling plasma diagnostic data for machine learning.

Active Learning: Closing the Loop

Active learning is not a convenience feature for sensor data — it is structurally necessary. Fusion diagnostics, robotic manipulation logs, additive manufacturing build records, and wearable ECG streams all share the same problem: the volume of unlabeled data vastly exceeds curated subsets, expert annotations are expensive, and the critical events you need to detect are rare (arXiv:2511.09725, Section 2.2). Active learning addresses this directly by routing the most informative unlabeled examples to human review first.

The active learning cycle:

- Autolabel the dataset with your current ONNX classifier

- Review low-confidence predictions — correct misclassifications

- Retrain on the expanded labeled set (seeds + corrections)

- Re-run bulk autolabeling with the improved model

- Repeat until the review queue shrinks to acceptable levels
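The review and retrain steps can be sketched as follows. The queue is ordered with margin sampling (the gap between the two highest class probabilities), which is one common active learning heuristic and an assumption here; the source describes routing by confidence threshold. All names are hypothetical.

```python
def margin(probs):
    """Gap between the two most likely classes; small means uncertain."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]


def build_review_queue(predictions, budget):
    """Return the `budget` most uncertain sample IDs, most uncertain first.

    `predictions` maps sample ID -> list of class probabilities (2+ classes).
    """
    ranked = sorted(predictions, key=lambda key: margin(predictions[key]))
    return ranked[:budget]


def merge_corrections(labeled, corrections):
    """Expanded training labels: existing seeds plus reviewer corrections."""
    merged = dict(labeled)
    merged.update(corrections)  # a correction overrides any earlier label
    return merged


# Hypothetical predictions for four windows.
predictions = {
    "w1": [0.98, 0.02],
    "w2": [0.55, 0.45],
    "w3": [0.70, 0.30],
    "w4": [0.51, 0.49],
}
queue = build_review_queue(predictions, budget=2)
training_labels = merge_corrections({"seed_01": "elm"}, {"w4": "no_event"})
```

Capping the queue with a budget keeps each review session bounded; the retrain step then consumes `training_labels` before the next bulk run.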

After 2–3 cycles, even a seed set of 20–50 labels produces a reliable autolabeler for well-defined event classes. Active learning is also non-negotiable because sensor signals drift — a sensor calibrated in January produces different waveforms by June. The statistical properties of the data are nonstationary: mean, variance, and correlations change over time as equipment ages, configurations shift, and operating conditions evolve.
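A crude drift check can flag when a retraining cycle is due. The sketch below measures how far the mean of a recent window has moved from a reference window, in units of the reference standard deviation. The function name, the sample values, and any alert threshold you apply to the score are assumptions; real pipelines often track variance and correlations as well.

```python
from statistics import mean, stdev


def drift_score(reference, recent):
    """Shift of the recent mean, in units of the reference standard deviation."""
    shift = abs(mean(recent) - mean(reference))
    ref_std = stdev(reference)
    if ref_std == 0:
        # A constant reference: any shift at all counts as unbounded drift.
        return float("inf") if shift > 0 else 0.0
    return shift / ref_std


# Hypothetical baseline readings from January and from June.
january = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]
june = [1.4, 1.5, 1.45, 1.55]

score = drift_score(january, june)
no_drift = drift_score(january, january)
```

A score of several standard deviations, as in this toy example, would suggest the classifier is now seeing waveforms unlike its training data.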

A scheduled nightly job can automate retraining, with MLflow tracking each run: pull corrections, retrain, export a versioned ONNX model, log to MLflow, update the autolabeler reference. dFL’s Autolabel SDK supports this natively. The MLflow sensor data integration guide [internal link → /mlflow-sensor-data-labeling-integration/] covers the full configuration.

Provenance: Every Autolabel Logged, Every Correction Tracked

A complete autolabel provenance record includes:

- Model artifact: ONNX filename or hash, training date, training set size, validation metrics

- Inference parameters: confidence thresholds, batch size, preprocessing version

- Per-label metadata: confidence score, review status (auto-accepted vs. human-corrected), reviewer ID

- Correction history: original prediction, corrected label, corrector, timestamp

- Pipeline version: the exact preprocessing DAG the classifier saw

This metadata exports as a JSON sidecar, in Parquet metadata, or as a browsable provenance DAG. Provenance is not just an audit trail — it preserves the per-signal uncertainties and masks that downstream data fusion methods need to weight sources correctly. Without this information, a classifier trained on one campaign cannot be reliably applied to another, because the noise characteristics and preprocessing decisions that shaped its training data are invisible (arXiv:2511.09725).
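As an illustration of what a per-label JSON sidecar entry might contain, the sketch below builds one record with the standard library. The field names and values are hypothetical and do not reflect dFL's actual schema; they only mirror the categories listed above.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class LabelProvenance:
    label_id: str
    model_sha256: str            # hash of the ONNX artifact that made the call
    preprocessing_version: str   # identifies the preprocessing DAG
    accept_threshold: float
    confidence: float
    predicted_label: str
    final_label: str
    status: str                  # "auto_accepted" or "human_corrected"
    reviewer_id: Optional[str] = None
    corrected_at: Optional[str] = None  # ISO 8601 timestamp


def sha256_of_bytes(data: bytes) -> str:
    """Hex digest used to pin the exact model artifact."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical record for one corrected label.
record = LabelProvenance(
    label_id="shot-0001/window-0042",
    model_sha256=sha256_of_bytes(b"placeholder model bytes"),
    preprocessing_version="dag-v3",
    accept_threshold=0.95,
    confidence=0.81,
    predicted_label="elm",
    final_label="no_event",
    status="human_corrected",
    reviewer_id="reviewer-07",
    corrected_at="2025-01-15T09:30:00Z",
)
sidecar = json.dumps(asdict(record), indent=2, sort_keys=True)
```

In practice the hash would be computed from the bytes of the exported `.onnx` file, so a collaborator can confirm they are running the same model.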

For teams working under FAIR data requirements, the sensor data provenance guide [internal link → /sensor-data-provenance-ml-pipelines/] covers the 8-item reproducibility checklist and ISO 8000 traceability requirements.

Real Example: Servo Misalignment Autolabeler (LeRobot SO-100)

The LeRobot SO-100 arm dataset, available through Hugging Face’s LeRobot framework, contains commanded actions and observed joint states across hundreds of episodes.

The autolabeling workflow:

- Align commanded (100 Hz) and observed (irregular CAN bus) streams onto a common time grid [internal link → /data-harmonization-for-multimodal-time-series/]

- Manually label misalignment events on 20 episodes

- Train a 1D CNN on 200ms sliding windows; export to ONNX

- Bulk autolabel the remaining 480 episodes (auto-accept at 0.90 and above; review band 0.65–0.90)

- Review 47 low-confidence predictions — correct 12 misclassifications

- Retrain and re-run; export final labeled dataset to Parquet with provenance JSON sidecar
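The windowing step in this workflow is simple arithmetic: at 100 Hz, a 200 ms window holds 20 samples. The sketch below assumes the streams are already aligned on the 100 Hz grid; the 100 ms hop (50% overlap) is an assumption, since the workflow does not state one.

```python
def sliding_windows(samples, sample_rate_hz=100, window_ms=200, hop_ms=100):
    """Cut a uniformly sampled sequence into fixed-length overlapping windows."""
    window_len = int(round(sample_rate_hz * window_ms / 1000))
    hop_len = max(1, int(round(sample_rate_hz * hop_ms / 1000)))
    last_start = len(samples) - window_len
    # Only full windows are returned; a trailing partial window is dropped.
    return [
        samples[start:start + window_len]
        for start in range(0, last_start + 1, hop_len)
    ]


# Hypothetical one-second episode at 100 Hz (sample indices stand in for data).
episode = list(range(100))
windows = sliding_windows(episode)
```

Each window then becomes one input row for the 1D CNN, and its start index maps the prediction back to a time span in the episode.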

One engineer. 20 minutes of manual labeling. Entire dataset autolabeled in hours. Full provenance for any collaborator to reproduce the result exactly. The full use case can be found in our use cases section. For the complete robotics workflow, see the guide to labeling robotics sensor data for manipulation ML [internal link → /labeling-robotics-sensor-data-manipulation-ml/].

What to Look for in a Sensor Data Autolabeling Platform

Four capabilities separate production-grade platforms from basic annotation tools:

- Plug-in autolabel SDK — bring your own ONNX or PyTorch model as a drop-in plug-in. MATLAB Signal Labeler supports custom MATLAB functions but not ONNX natively. Seeq and TrendMiner lack autolabel SDKs entirely. The MATLAB Signal Labeler comparison [internal link → /matlab-signal-labeler-vs-dfl/] covers SDK differences.

- Confidence scoring and review queues — set thresholds and route low-confidence predictions to human review automatically.

- Provenance tracking — every autolabel decision logged with model version, confidence, and timestamp. Non-negotiable for FAIR data compliance.

- MLflow or experiment tracking integration — log retraining runs, compare model versions, automate nightly updates.

FAQ

What formats does dFL accept for ONNX autolabeler input?

dFL’s Autolabel SDK [link → https://dfl.sophelio.io/documentation/autolabel-sdk] accepts any ONNX model with a defined input shape. Input preprocessing is configured in the SDK plug-in, not baked into the model.

How many manual labels do I need before autolabeling works?

For statistical detectors: 0. For ONNX classifiers: 20–50 labels per class minimum; 100+ for production-grade precision. Boundary coverage matters more than raw count. The time-series labeling scale guide [internal link → /labeling-how-to-label-time-series-and-sensor-data-at-scale/] covers seed label strategy.

Does autolabeling work for multi-class events?

Yes. The ONNX classifier outputs probabilities for each class; the max-probability class is assigned. Ensure 20–50 seed labels per class with boundary coverage.

How does active learning improve autolabeling over time?

Each cycle routes low-confidence predictions to human review, incorporates corrections, and retrains. After 2–3 iterations, precision on high-confidence predictions typically reaches 90%+ for well-defined event types. MLflow integration [external link → https://mlflow.org/] automates retraining and version tracking.

Can preprocessing choices invalidate my autolabeler?

Yes. If gap-filling or normalization draws on future values (information that would not be available at prediction time), the classifier inherits causal contamination and will overperform in testing but fail in production. Restrict fill operations to past-only data and audit your preprocessing DAG for causal leakage. The preprocessing order guide [internal link → /preprocessing-order-in-time-series-ml-pipelines/] covers this in detail.

Bring your own data to dFL

Plug your ONNX model into dFL’s Autolabel SDK